Note

New to DeepInverse? Get started with the basics in the 5-minute quickstart tutorial.

Uncertainty quantification with PnP-ULA

This example shows how to use sampling algorithms to quantify the uncertainty of a reconstruction from incomplete and noisy measurements.

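ULA obtains samples by running the following iteration:

\[x_{k+1} = x_k + \eta \nabla \log p(y \mid A, x_k) + \eta \alpha \nabla \log p(x_k) + \sqrt{2\eta}\, z_k,\]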

where \(z_k \sim \mathcal{N}(0, I)\) is a Gaussian random variable, \(\eta\) is the step size and \(\alpha\) is a parameter controlling the regularization.
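To make the iteration concrete, here is a minimal NumPy sketch of ULA on a toy problem whose posterior is known in closed form: a Gaussian prior \(x \sim \mathcal{N}(0, \tau^2 I)\) and denoising measurements \(y = x + \sigma n\) (so \(A = I\)). The helper `ula` and the toy setup are illustrative assumptions, not deepinv's `ULAIterator`. With \(\alpha = 1\) and a small step size, the running mean and variance should approach the exact posterior moments.

```python
import numpy as np


def ula(grad_log_likelihood, grad_log_prior, x0, step_size, alpha, n_iter, burn_in, seed=0):
    """Hypothetical ULA helper: returns the per-coordinate mean and variance of
    the samples kept after `burn_in` iterations."""
    rng = np.random.default_rng(seed)
    x = np.array(x0, dtype=float)
    sum_x = np.zeros_like(x)   # running sum of kept samples
    sum_x2 = np.zeros_like(x)  # running sum of squared kept samples
    kept = 0
    for k in range(n_iter):
        z = rng.standard_normal(x.shape)  # z_k ~ N(0, I)
        drift = grad_log_likelihood(x) + alpha * grad_log_prior(x)
        x = x + step_size * drift + np.sqrt(2.0 * step_size) * z
        if k >= burn_in:
            sum_x += x
            sum_x2 += x * x
            kept += 1
    mean = sum_x / kept
    var = sum_x2 / kept - mean**2
    return mean, var


# Toy problem: prior N(0, tau^2), measurement y = x + sigma * noise (A = I).
sigma, tau = 0.5, 1.0
rng = np.random.default_rng(1)
x_true = tau * rng.standard_normal(256)
y = x_true + sigma * rng.standard_normal(256)

mean, var = ula(
    grad_log_likelihood=lambda x: (y - x) / sigma**2,
    grad_log_prior=lambda x: -x / tau**2,
    x0=np.zeros_like(y),
    step_size=0.01,
    alpha=1.0,
    n_iter=20_000,
    burn_in=1_000,
)

# Exact Gaussian posterior for comparison.
post_var = 1.0 / (1.0 / tau**2 + 1.0 / sigma**2)  # 0.2
post_mean = post_var * y / sigma**2
print(np.abs(mean - post_mean).mean(), var.mean(), post_var)
```

Because there is no Metropolis-Hastings correction (the "unadjusted" in ULA), the stationary variance of this Gaussian toy is inflated by a factor \(1/(1 - \eta P/2)\), where \(P\) is the posterior precision. That is about 2.6% here. Larger steps mix faster but increase this bias.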

The PnP-ULA method is described in Laumont et al. [1].

import deepinv as dinv
from deepinv.utils.plotting import plot
import torch
from deepinv.utils import load_example

Load an image from the internet

This example uses an image of Messi.

device = dinv.utils.get_device()
x = load_example("messi.jpg", img_size=32).to(device)

Selected GPU 0 with 4759.25 MiB free memory

Define the forward operator and noise model

This example uses inpainting as the forward operator and Gaussian noise as the noise model.
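Here is a rough NumPy sketch of this measurement model. It makes three assumptions: a float mask of 0.5 means each pixel is kept independently with probability 0.5, the mask is shared across color channels, and noise is added to every entry of \(Ax\). Under these assumptions the operator is \(A = \mathrm{diag}(M)\) for a binary mask \(M\), so it is self-adjoint, and the linear reconstruction \(A^\top y\) simply zeroes the missing pixels. The helpers `forward` and `adjoint` are hypothetical and not part of deepinv.

```python
import numpy as np

rng = np.random.default_rng(0)
sigma = 0.1
x = rng.uniform(0.0, 1.0, size=(3, 32, 32))  # stand-in for a 3x32x32 image in [0, 1]

# Assumed mask: one Bernoulli(0.5) draw per pixel, shared across channels.
mask = (rng.uniform(size=(1, 32, 32)) < 0.5).astype(float)


def forward(x, mask):
    """A x: keep observed pixels, zero the rest."""
    return mask * x


def adjoint(y, mask):
    """A^T y: equal to A y because A = diag(mask) is symmetric."""
    return mask * y


y = forward(x, mask) + sigma * rng.standard_normal(x.shape)  # noisy measurement
x_lin = adjoint(y, mask)  # linear reconstruction A^T y
print(mask.mean(), np.abs(x_lin[:, mask[0] == 0]).max())
```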

sigma = 0.1  # noise level
physics = dinv.physics.Inpainting(mask=0.5, img_size=x.shape[1:], device=device)
physics.noise_model = dinv.physics.GaussianNoise(sigma=sigma)

# Set the global random seed from pytorch to ensure reproducibility of the example.
torch.manual_seed(0)


Define the likelihood

Since the noise model is Gaussian, the negative log-likelihood is, up to an additive constant, the scaled squared L2 loss \(\frac{1}{2\sigma^2}\|y - Ax\|_2^2\).
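The data-fidelity gradient that enters the ULA drift can be written out explicitly. For a masked operator \(A = \mathrm{diag}(M)\), the gradient of \(\frac{1}{2\sigma^2}\|y - Ax\|_2^2\) is \(A^\top(Ax - y)/\sigma^2\), and \(\nabla \log p(y \mid x)\) is its negative. The NumPy sketch below checks that formula against central finite differences. The helper names are hypothetical; this is not deepinv's `L2` class.

```python
import numpy as np


def gaussian_nll(x, y, mask, sigma):
    """Negative log-likelihood up to a constant: ||y - A x||^2 / (2 sigma^2)."""
    r = y - mask * x
    return 0.5 * np.sum(r**2) / sigma**2


def gaussian_nll_grad(x, y, mask, sigma):
    """Analytic gradient A^T (A x - y) / sigma^2 with A = diag(mask)."""
    return mask * (mask * x - y) / sigma**2


rng = np.random.default_rng(0)
sigma = 0.1
x = rng.uniform(size=16)
mask = (rng.uniform(size=16) < 0.5).astype(float)
y = mask * rng.uniform(size=16) + sigma * rng.standard_normal(16)

# Central finite differences, one coordinate at a time.
h = 1e-6
fd = np.array([
    (gaussian_nll(x + h * e, y, mask, sigma) - gaussian_nll(x - h * e, y, mask, sigma)) / (2 * h)
    for e in np.eye(16)
])
grad = gaussian_nll_grad(x, y, mask, sigma)
print(np.max(np.abs(fd - grad)))
```

Unobserved pixels get a zero likelihood gradient, so in the sampler they are moved only by the prior term and the injected noise.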

# load Gaussian Likelihood
likelihood = dinv.optim.data_fidelity.L2(sigma=sigma)

Define the prior

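The score of a distribution can be approximated with Tweedie's formula, \(\nabla \log p_\sigma(x) \approx \frac{D_\sigma(x) - x}{\sigma^2}\). Here \(D_\sigma\) is a denoiser for Gaussian noise of standard deviation \(\sigma\), and \(p_\sigma\) is the prior smoothed by that noise. This approximation is implemented in the deepinv.optim.ScorePrior class.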

This example uses a pretrained DnCNN model.

From a Bayesian point of view, the score plays the role of the gradient of the log prior. Equivalently, its negative is the gradient of the negative log prior \(-\log p(x)\).

The hyperparameter sigma_denoiser (\(\sigma\)), the noise level at which the denoiser is applied, controls the strength of the prior.
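Tweedie's formula can be checked on a case where everything is known in closed form. Suppose the prior is \(\mathcal{N}(0, \tau^2 I)\). Then the MMSE denoiser at noise level \(\sigma\) is \(D_\sigma(v) = \frac{\tau^2}{\tau^2 + \sigma^2} v\), and the smoothed density \(p_\sigma = \mathcal{N}(0, (\tau^2 + \sigma^2) I)\) has score \(-v/(\tau^2 + \sigma^2)\). The NumPy sketch below confirms that \((D_\sigma(v) - v)/\sigma^2\) recovers that score. `score_from_denoiser` is a hypothetical helper, not deepinv's `ScorePrior`. A learned denoiser such as DnCNN only approximates the MMSE denoiser, so in practice the score is approximate.

```python
import numpy as np


def score_from_denoiser(denoiser, v, sigma):
    """Tweedie's formula: grad log p_sigma(v) = (D_sigma(v) - v) / sigma^2."""
    return (denoiser(v, sigma) - v) / sigma**2


tau = 0.5  # prior standard deviation (toy Gaussian prior)


def mmse_denoiser(v, sigma):
    """Exact MMSE denoiser for a N(0, tau^2) prior under N(0, sigma^2) noise."""
    return tau**2 / (tau**2 + sigma**2) * v


rng = np.random.default_rng(0)
v = rng.standard_normal(10)
sigma = 2 / 255  # same value as sigma_denoiser in this example

score = score_from_denoiser(mmse_denoiser, v, sigma)
exact_score = -v / (tau**2 + sigma**2)
neg_log_prior_grad = -score  # gradient of -log p_sigma
print(np.max(np.abs(score - exact_score)))
```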

In this example, we use a DnCNN model pretrained with the deepinv.loss.FNEJacobianSpectralNorm loss. This loss encourages the denoiser to be firmly non-expansive (see Terris et al. [2]) and helps stabilize the sampling algorithm.
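A denoiser \(D\) is firmly non-expansive exactly when \(2D - I\) is non-expansive (1-Lipschitz). For a differentiable denoiser, this amounts to the Jacobian of \(2D - I\) having spectral norm at most 1 everywhere, which is the kind of quantity a Jacobian spectral-norm penalty targets. The NumPy sketch below uses a linear toy: it estimates \(\|2D - I\|_2\) by power iteration for two symmetric "denoiser" matrices. One has eigenvalues in \([0, 1]\) and is firmly non-expansive; the other has an eigenvalue above 1 and is not. This is our own illustration, not how deepinv's FNEJacobianSpectralNorm is implemented.

```python
import numpy as np


def spectral_norm(apply_q, apply_qt, dim, n_iter=200, seed=0):
    """Power iteration on Q^T Q; returns an estimate of ||Q||_2."""
    rng = np.random.default_rng(seed)
    v = rng.standard_normal(dim)
    v /= np.linalg.norm(v)
    for _ in range(n_iter):
        w = apply_qt(apply_q(v))  # Q^T Q v
        v = w / np.linalg.norm(w)
    return np.linalg.norm(apply_q(v))


def toy_denoiser(eigenvalues, seed=0):
    """Symmetric linear 'denoiser' D = U diag(eigenvalues) U^T with a random orthogonal U."""
    n = len(eigenvalues)
    rng = np.random.default_rng(seed)
    u, _ = np.linalg.qr(rng.standard_normal((n, n)))
    return u @ np.diag(eigenvalues) @ u.T


norms = {}
for name, eigs in [("fne", np.linspace(0.0, 1.0, 8)), ("not fne", np.linspace(0.0, 1.2, 8))]:
    D = toy_denoiser(eigs)
    Q = 2 * D - np.eye(len(eigs))  # D is firmly non-expansive iff ||Q||_2 <= 1
    norms[name] = spectral_norm(lambda v: Q @ v, lambda v: Q.T @ v, dim=len(eigs))
print(norms)
```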

sigma_denoiser = 2 / 255
prior = dinv.optim.ScorePrior(
    denoiser=dinv.models.DnCNN(pretrained="download_lipschitz")
).to(device)

Create the MCMC sampler

Here we use the Unadjusted Langevin Algorithm (ULA), implemented in deepinv.sampling.ULAIterator, to sample from the posterior defined by the likelihood and prior above.

The hyperparameter step_size (\(\eta\)) controls the step size of the MCMC sampler, regularization (\(\alpha\)) controls the strength of the prior, and iterations controls the number of iterations of the sampler.

regularization = 0.9  # alpha in the ULA update
step_size = 0.01 * (sigma**2)  # eta in the ULA update
iterations = int(5e3) if torch.cuda.is_available() else 10  # few iterations on CPU to keep the example fast
params = {
    "step_size": step_size,
    "alpha": regularization,
    "sigma": sigma_denoiser,
}
f = dinv.sampling.sampling_builder(
    "ULA",
    prior=prior,
    data_fidelity=likelihood,
    max_iter=iterations,
    params_algo=params,
    thinning=1,
    verbose=True,
)

Generate the measurement

We apply the forward model to generate the noisy measurement.

y = physics(x)

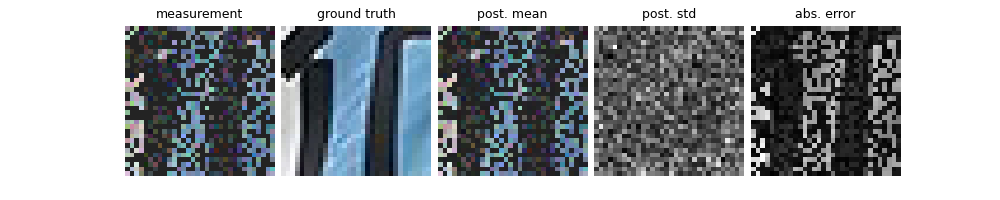

Run the sampling algorithm and plot results

The sampling algorithm returns the posterior mean and variance. We compare the posterior mean with a simple linear reconstruction.
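The posterior mean and variance can be accumulated on the fly rather than by storing every sample. A common choice is Welford's online algorithm. The NumPy sketch below implements it with a `thinning` parameter that keeps every `thinning`-th sample, and it reproduces the batch mean and population variance of the kept samples. This is an illustrative assumption about how such statistics can be computed, not a description of deepinv's internals. `RunningStats` is a hypothetical name.

```python
import numpy as np


class RunningStats:
    """Hypothetical Welford accumulator for per-pixel mean and variance."""

    def __init__(self):
        self.n = 0
        self.mean = None
        self.m2 = None  # running sum of squared deviations from the mean

    def update(self, x):
        x = np.asarray(x, dtype=float)
        if self.n == 0:
            self.mean = np.zeros_like(x)
            self.m2 = np.zeros_like(x)
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def var(self):
        return self.m2 / self.n  # population variance of the kept samples


rng = np.random.default_rng(0)
samples = rng.normal(loc=2.0, scale=0.5, size=(1000, 4, 4))  # stand-in for a chain of 4x4 images
thinning = 5

stats = RunningStats()
for k, s in enumerate(samples):
    if k % thinning == 0:  # keep every `thinning`-th sample
        stats.update(s)

kept = samples[::thinning]
print(stats.n, np.max(np.abs(stats.mean - kept.mean(axis=0))))
```

Welford's update avoids the cancellation that the naive "mean of squares minus square of mean" formula can suffer when the variance is small relative to the mean.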

mean, var = f.sample(y, physics)

# linear reconstruction: apply the adjoint A^T to the measurement
x_lin = physics.A_adjoint(y)

# compute PSNR
print(f"Linear reconstruction PSNR: {dinv.metric.PSNR()(x, x_lin).item():.2f} dB")
print(f"Posterior mean PSNR: {dinv.metric.PSNR()(x, mean).item():.2f} dB")

# plot results
error = (mean - x).abs().sum(dim=1).unsqueeze(1)  # per-pixel absolute error, summed over channels
std = var.sum(dim=1).unsqueeze(1).sqrt()  # per-pixel std. dev. from the channel-summed variance
imgs = [x_lin, x, mean, std / std.flatten().max(), error / error.flatten().max()]
plot(
    imgs,
    titles=["measurement", "ground truth", "post. mean", "post. std", "abs. error"],
)


100%|██████████| 5000/5000 [00:04<00:00, 1003.57it/s]

Iteration 4999, current converge crit. = 1.43E-05, objective = 1.00E-03

Iteration 4999, current converge crit. = 3.42E-04, objective = 1.00E-03

Linear reconstruction PSNR: 8.55 dB

Posterior mean PSNR: 22.31 dB
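PSNR is \(10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})\), where MSE is the mean squared error with respect to the ground truth. The NumPy sketch below assumes pixel values in \([0, 1]\), so \(\mathrm{MAX} = 1\). We take this to match the default of `dinv.metric.PSNR`, but that is an assumption. The helper `psnr` is hypothetical.

```python
import numpy as np


def psnr(x_hat, x, max_pixel=1.0):
    """Peak signal-to-noise ratio in dB between an estimate and the ground truth."""
    mse = np.mean((np.asarray(x_hat, dtype=float) - np.asarray(x, dtype=float)) ** 2)
    return 10.0 * np.log10(max_pixel**2 / mse)


x = np.full((3, 32, 32), 0.5)
print(psnr(x + 0.1, x))  # uniform error of 0.1 -> MSE = 0.01 -> about 20 dB
```

Under this definition, halving the error adds \(20\log_{10} 2 \approx 6.02\) dB.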

- References:

Total running time of the script: (0 minutes 5.418 seconds)