NCSNpp#

- class deepinv.models.NCSNpp(model_type='ncsn', precondition_type='edm', img_resolution=64, in_channels=3, out_channels=3, label_dim=0, augment_dim=9, model_channels=128, channel_mult=(1, 2, 2, 2), channel_mult_emb=4, num_blocks=4, attn_resolutions=(16,), dropout=0.10, label_dropout=0.0, pretrained='download', _was_trained_on_minus_one_one=False, pixel_std=0.75, device=None, **kwargs)[source]#

Bases:

DenoiserImplementation of the DDPM++ and NCSN++ UNet architectures.

Equivalent to the original implementation by Song et al.[1], available at the official implementation. The DDPM model was originally built for the VP-SDE from Song et al.[1] while the NCSN++ model was originally built with the VE-SDE. See the diffusion SDE implementations for more details on the VP-SDE and VE-SDE from Song et al.[1]. The model is also pre-conditioned by the method described in Karras et al.[2].

The architecture consists of a series of convolution layer, down-sampling residual blocks and up-sampling residual blocks with skip-connections of scale \(\sqrt{0.5}\). The model also supports an additional class condition model. Each residual block has a self-attention mechanism with multiple channels per attention head. The noise level can be embedded using either Positional Embedding or Fourier Embedding with optional augmentation linear layer.

- Parameters:

model_type (str) –

Model type, which defines the architecture and embedding types. Options are:

'ncsn'for the NCSN++ architecture: the following arguments will be ignored and set toembedding_type='fourier',channel_mult_noise=2,encoder_type='residual',decoder_type='standard',resample_filter=[1,3,3,1].'ddpm'for the DDPM++ architecture: the following arguments will be ignored and set toembedding_type='positional',channel_mult_noise=1,encoder_type='standard',decoder_type='standard',resample_filter=[1,1].

Default is

'ncsn'.precondition_type (str) – Input preconditioning for denoising. Can be ‘edm’ for the method from Karras et al.[2] or ‘baseline_ve’ for the original method from Song et al.[1]. See Table 1 from Karras et al.[2] for more details.

img_resolution (int) – Image spatial resolution at input/output.

in_channels (int) – Number of color channels at input.

out_channels (int) – Number of color channels at output.

label_dim (int) – Number of class labels, 0 = unconditional.

augment_dim (int) – Augmentation label dimensionality, 0 = no augmentation.

model_channels (int) – Base multiplier for the number of channels.

channel_mult (list) – Per-resolution multipliers for the number of channels.

channel_mult_emb (int) – Multiplier for the dimensionality of the embedding vector.

num_blocks (int) – Number of residual blocks per resolution.

attn_resolutions (list) – List of resolutions with self-attention.

dropout (float) – Dropout probability of intermediate activations.

label_dropout (float) – Dropout probability of class labels for classifier-free guidance.

embedding_type (str) – Timestep embedding type:

'positional'for DDPM++,'fourier'for NCSN++.channel_mult_noise (int) – Timestep embedding size: 1 for DDPM++, 2 for NCSN++.

encoder_type (str) – Encoder architecture:

'standard'for DDPM++,'residual'for NCSN++.decoder_type (str) – Decoder architecture:

'standard'for both DDPM++ and NCSN++.resample_filter (list) – Resampling filter:

[1,1]for DDPM++,[1,3,3,1]for NCSN++.pretrained (str | None) –

Use pretrained weights (or a path to custom weights).

If

pretrained is None, the weights are initialized randomly using PyTorch’s default initialization.pretrained='edm-ffhq64-64x64-uncond-ve'loads NCSN++ weights from Karras et al.[2], trained on FFHQ 64x64 with the EDM diffusion schedule (see Table 1 in Karras et al.[2]).pretrained='edm-cifar10-32x32-uncond-ve'loads NCSN++ weights from Karras et al.[2], trained on CIFAR-10 32x32 with the EDM diffusion schedule.pretrained='edm-ffhq-64x64-uncond-vp'loads DDPM++ weights from Karras et al.[2], trained on FFHQ 64x64 with the EDM diffusion schedule.pretrained='edm-cifar10-32x32-uncond-vp'loads DDPM++ weights from Karras et al.[2], trained on CIFAR-10 32x32 with the EDM diffusion schedule.pretrained='baseline-ffhq-64x64-uncond-ve'loads NCSN++ weights from Song et al.[1], trained on FFHQ 64x64 with the VE-SDE diffusion schedule.pretrained='baseline-cifar10-32x32-uncond-ve'loads NCSN++ weights from Song et al.[1], trained on CIFAR-10 32x32 with the VE-SDE diffusion schedule.pretrained='download'is a convenience alias: ifmodel_type='ncsn'(default) it maps to'edm-ffhq-64x64-uncond-ve', and ifmodel_type='ddpm'it maps to'edm-ffhq-64x64-uncond-vp'.pretrainedmay also be a filesystem path to user-provided weights; the model is assumed to be trained on pixels in[0, 1]—if trained on[-1, 1], setmodel._was_trained_on_minus_one_one = Trueafter loading.

See pretrained-weights for more details.

_was_trained_on_minus_one_one (bool) – Indicate whether the model has been trained on

[-1, 1]pixels or[0, 1]pixels. Default toFalse.pixel_std (float) – The standard deviation of the normalized pixels (to

[0, 1]for example) of the data distribution. Default to0.75.device (torch.device) – Instruct our module to be either on cpu or on gpu. Default to

None, which suggests working on cpu.

- References:

- forward(x, sigma, class_labels=None, augment_labels=None, input_in_minus_one_one=False, *args, **kwargs)[source]#

Run the denoiser on noisy image.

- Parameters:

x (torch.Tensor) – noisy image

sigma (Union[torch.Tensor, float]) – noise level

class_labels (torch.Tensor) – class labels

augment_labels (torch.Tensor) – augmentation labels

input_in_minus_one_one (bool) – whether the input

xis in[-1, 1]range. Default isFalse.

- Return torch.Tensor:

denoised image.

- forward_unet(x, sigma, class_labels=None, augment_labels=None)[source]#

Run the unet.

- Parameters:

x (torch.Tensor) – noisy image

sigma (Union[torch.Tensor, float]) – noise level

class_labels (torch.Tensor) – class labels

augment_labels (torch.Tensor) – augmentation labels

- Return torch.Tensor:

denoised image.

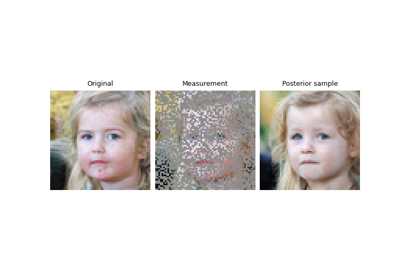

Examples using NCSNpp:#

Building your diffusion posterior sampling method using SDEs