MLEM#

- class deepinv.optim.MLEM(data_fidelity=None, prior=None, lambda_reg=1.0, g_param=None, sigma_denoiser=None, max_iter=100, crit_conv='residual', thres_conv=1e-5, early_stop=False, custom_metrics=None, custom_init=None, unfold=False, trainable_params=None, cost_fn=None, params_algo=None, **kwargs)[source]#

Bases:

BaseOptimMaximum Likelihood Expectation Maximization (MLEM) algorithm for Poisson inverse problems.

This algorithm Shepp and Vardi[1] was originally proposed for deconvolution by Richardson and Lucy Richardson[2], Lucy[3] and was later adapted to tomographic reconstruction by Shepp and Vardi Shepp and Vardi[1]. It is also widely used in Non-Negative Matrix Factorization (NMF) problems where it is known as the Lee and Seung multiplicative update algorithm Lee and Seung[4].

The algorithm is traditionally derived from the Expectation-Maximization (EM) framework with specific latent variables. Alternatively, it can be seen as a Majorization-Minimization (MM) algorithm where each iteration consists in constructing a surrogate function that majorizes the Poisson negative log-likelihood and then minimizing this surrogate function. At each iteration, the algorithm performs a multiplicative update of the form:

\[x_{k+1} = \frac{x_k}{A^T \mathbf{1}} \odot A^T \left(\frac{y}{A x_k}\right)\]where \(A\) is the forward operator, \(y\) is the observed data, \(\mathbf{1}\) is a tensor of ones, and \(\odot\) denotes element-wise multiplication.

The algorithm can be used with a prior term (e.g., for MAP-EM variants) or without (standard MLEM). See

deepinv.optim.optim_iterators.MLEMIterationfor the details of the iteration.The MLEM algorithm minimizes the Poisson negative log-likelihood data-fidelity. The

data_fidelityargument can be used to measure progress during optimization (e.g., for early stopping or metrics computation), but it is not used as the objective function to minimize. Only the Poisson negative log-likelihood is minimized regardless of the provideddata_fidelityargument.A regularization can be included via the

priorargument, which will lead to a MAP-EM variant of the MLEM algorithm. Our implementation is based on the One-Step-Late (OSL) heuristic of Green Green[5]. It leads to the following update rule:\[x_{k+1} = \frac{x_k}{A^T \mathbf{1} + \lambda \nabla g(x_k)} \odot A^T \left(\frac{y}{A x_k}\right)\]where \(g\) is the prior function and \(\lambda\) is the regularization parameter.

In the case of a non-differentiable prior, the gradient term \(\nabla g(x_k)\) is replaced by a subgradient:

\[x_{k+1} = \frac{x_k}{A^T \mathbf{1} + \lambda \partial g(x_k)} \odot A^T \left(\frac{y}{A x_k}\right)\]where \(\partial g(x_k)\) is a subgradient of \(g\) at point \(x_k\).

- Parameters:

data_fidelity (deepinv.optim.DataFidelity, list[DataFidelity]) – data fidelity term. If

None, the data fidelity term is not used. Default:None.prior (deepinv.optim.Prior, list[Prior]) – prior term. If

None, no prior is used. Default:None.lambda_reg (float) – regularization parameter \(\lambda\). Default:

1.0.g_param (float) – parameter for the prior. Default:

None.sigma_denoiser (float) – same as

g_param. If bothg_paramandsigma_denoiserare provided,g_paramis used. Default:None.max_iter (int) – maximum number of iterations. Default:

100.crit_conv (str) – convergence criterion, either

"residual"or"cost". Default:"residual".thres_conv (float) – convergence threshold. Default:

1e-5.early_stop (bool) – if

True, the algorithm stops when the convergence criterion is met. Default:False.custom_metrics (dict) – dictionary of custom metrics to compute at each iteration. Default:

None.custom_init (Callable) – custom initialization function. Default:

None.unfold (bool) – whether to unfold the algorithm or not. Default:

False.trainable_params (list) – list of ADMM parameters to be trained if

unfoldis True. To choose between["lambda", "stepsize", "g_param", "beta"]. Default: None, which means that all parameters are trainable ifunfoldis True. For no trainable parameters, set to an empty list.cost_fn (Callable) – Custom user input cost function.

cost_fn(x, data_fidelity, prior, cur_params, y, physics)takes as input the current primal variable (torch.Tensor), the current data-fidelity (deepinv.optim.DataFidelity), the current prior (deepinv.optim.Prior), the current parameters (dict), and the measurement (torch.Tensor). Default:None.params_algo (dict) – optionally, directly provide the ADMM parameters in a dictionary. This will overwrite the parameters in the arguments

stepsize,lambda_reg,g_paramandbeta.

- References:

Examples using MLEM:#

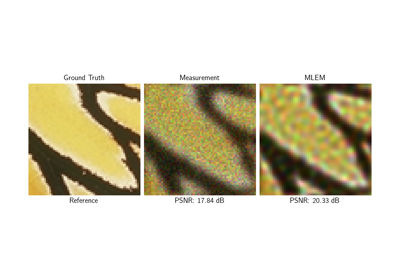

Poisson Inverse Problems with Maximum-Likelihood Expectation-Maximization (MLEM)